Meta has published its latest Community Standards Enforcement Report, which outlines all the content that it took action on in Q2, as well as its Widely Viewed Content update, which provides a glimpse of what was gaining traction among Facebook users in the period.

Neither shows any major shifts, though the trends here are worth noting, particularly in the context of Meta’s change in policy enforcement to a model more in line with the expectations of the Trump Administration.

Meta makes specific note of this in the introduction of its Community Standards update:

“In January, we announced a series of steps to allow for more speech while working to make fewer errors, and our last quarterly report highlighted how we cut errors in half. The latest report continues to reflect this progress. Since we began our efforts to reduce over-enforcement, we’ve cut enforcement errors in the U.S. by more than 75% on a weekly basis.”

Which sounds good, right? Fewer enforcement errors is obviously a good thing, as it means that people aren’t being incorrectly penalized for their posts.

But it’s all relative. If you reduce enforcement overall, you’re inevitably going to see fewer errors, but that also means that more harmful content could be getting through because of those lower overall thresholds.

So is that what’s happening in Meta’s case?

Well…

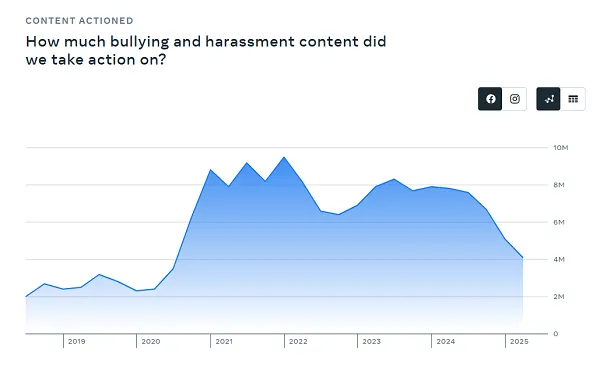

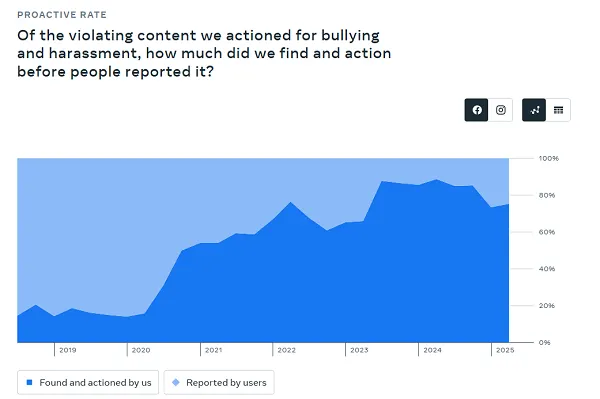

One key measure here would be “Bullying and Harassment,” and the rate at which Meta is enforcing it under these revised policies.

And going on the data trends, Meta is clearly taking less action on this front:

That is a precipitous drop in reports, while the expanded data here also shows that Meta’s not detecting as much before users report it.

So that would suggest that Meta’s doing worse on this front, though there will be fewer false positives.

This demonstrates how the error-reduction figure can be a misleading summary, because reduced enforcement means more harm, going by the shift toward user reports over proactive enforcement.

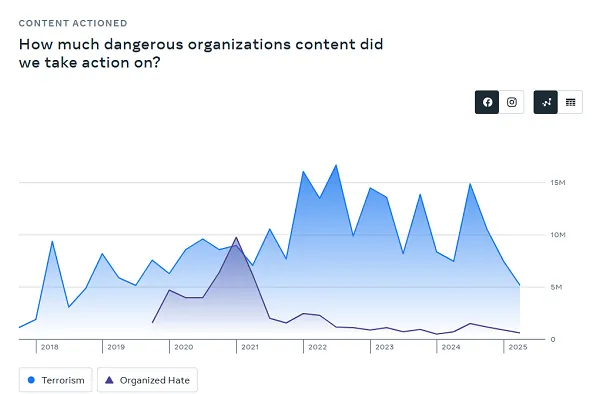

Meta’s also taking less action on “Dangerous Organizations,” which relates to terror and hate speech content.

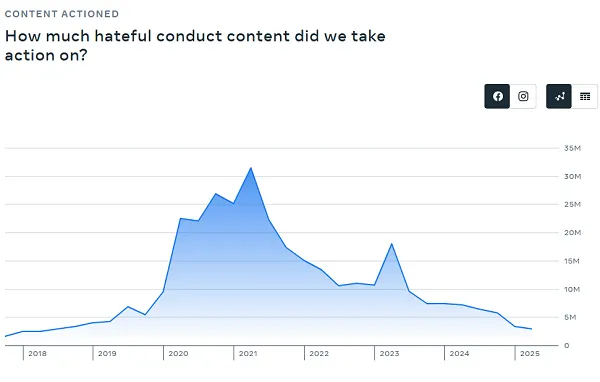

As well as “Hateful Content” specifically:

So you can see from the trends that Meta’s shift in approach is leading to less enforcement in several key areas of concern. But fewer incorrect reports. That’s a good thing, right?

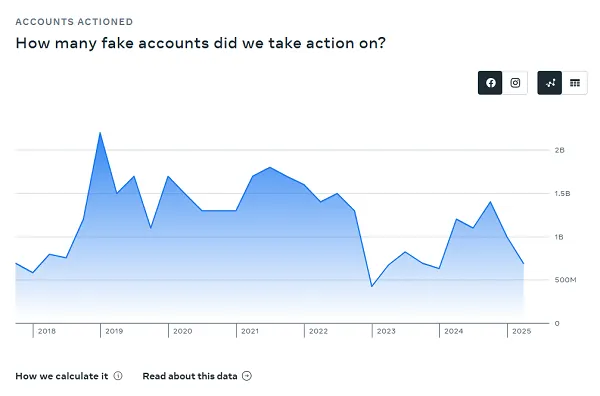

Meta’s also removing fewer fake accounts, though it has pegged its fake account presence at 4% of its overall audience count.

For a long time, Meta pegged this number at 5%, but in its last two reports, it has actually reduced that prevalence figure. For context, in its Q1 report, it noted that 3% of its worldwide monthly active users on Facebook were fakes. So the current 4% is better than the long-standing 5% estimate, but worse than last quarter’s 3%.

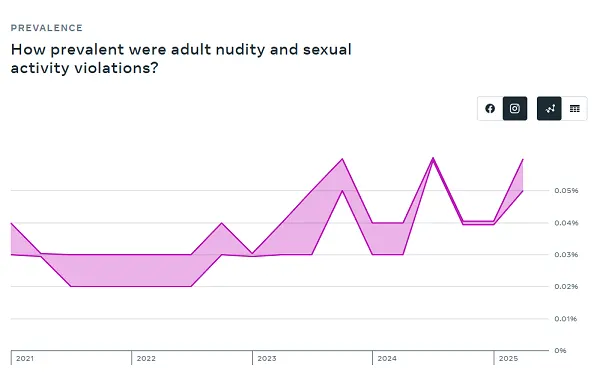

Meta also says that this increase…

…is a bit of a misnomer, because the increase in detections actually relates to improved measurement of nudity and sexual activity violations, not an actual increase in views of such content.

Though this:

Still seems like a concern.

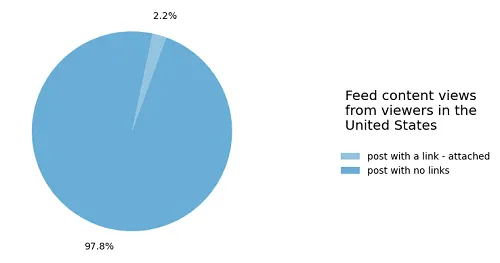

Also, more bad news for those looking to drive referral traffic from Facebook:

“97.8% of the views in the US during Q2 2025 did not include a link to a source outside of Facebook. For the 2.2% of views in posts that did include a link, they typically came from a Page the person followed (this includes posts which may also have had photos and videos, in addition to links).”

And despite Meta moving to allow more political discussion in its apps, that link-sharing figure is actually getting worse, with Meta reporting that link posts made up 2.7% of overall views in Q1.

So, on balance, not a lot of link posts are getting a lot of views.

The most widely viewed posts on Facebook in Q2 were made up of the usual mix of topical news and sideshow oddities, including updates about the death of Pope Francis, a brawl at a Chuck E. Cheese restaurant, rappers pledging to buy grills for a local football team if they win a championship, a Texas woman dying of a “brain eating amoeba” and Emma Stone avoiding a “bee attack” at a red carpet premiere.

So Facebook remains a mish-mash of news content and supermarket tabloid rubbish, though there were no weird AI generations this time around (note: two of the most-viewed posts were no longer available).

Meta has also shared its Oversight Board’s 2024 annual report, which shows how its independent review panel helped to shape Meta policy throughout the year.

As per the report:

“Since January 2021, we have made more than 300 recommendations to Meta. Implementation or progress on 74% of these has resulted in greater transparency, clear and accessible rules, improved fairness for users and greater consideration of Meta’s human rights responsibilities, including respect for freedom of expression.”

Which is a positive, showing that Meta is looking to evolve its policies in line with critical review of its rules.

Though its broader policy shifts related to greater speech freedoms will remain the key focus, for the next three years at least, as Meta looks to better align with the requests of the U.S. government.